I recently gave a talk at an Oxford conference on evolution, pulling together some of my ideas and our recent work into a comprehensive picture of how diverse intelligence and evolutionary processes might work together. There were four main claims:

1) The Genotype -> Phenotype conversion process is intelligent. Mutation operates on genes, while selection operates over form and function of bodies; it is essential to understand the mapping between them (developmental and regenerative processes). Morphogenesis is a problem-solving, creative, improvisational process, not a mechanical (albeit complex) one. This part of the talk briefly warmed up the idea of intelligence at unfamiliar scales and substrates, and then went over our work on the dynamic interpretation of genetic memories in morphogenesis as intelligence of the cellular collective. This is connected to the idea of DNA as prompts, rather than determinants, and the autopoiesis of functional anatomy as an active sense-making process in the contexts of embryogenesis, regeneration, cancer suppression, etc. (in other words, strong symmetries with issues in cognitive science). You can see the details in these papers: paper1, paper2, paper3 (free preprint versions).

2) Running even conventional evolutionary processes over a multiscale, agential material (i.e., living matter) has some remarkable consequences for the rate and capabilities of evolution (and that’s even before positing non-random mutations or extending it toward Richard Watson’s Natural Induction ideas). Evolution works faster and differently than it would over a passive substrate, and it favors the production of problem-solving agents that exploit plasticity of interpretation of their genomic hardware as memory engrams. You can see the details in these papers: paper1, paper2.

3) I then talked about: a) the question of where the properties of novel beings come from (ones that have neither been engineered nor selected for), teasing the connection to the Platonic Space ideas; b) the interesting dynamics of a Prisoners’ Dilemma simulation in which agents were allowed to merge and split, and turned out to preferentially form multi-cell systems with increased causal emergence; and c) a bit of new data (much more coming soon) around the Functional Agency Ratchet – a relationship between learning and causal emergence that kickstarts an asymmetric, upward-pointing, positive feedback spiral for intelligence and top-down causation (which is present before differential replication and selection dynamics set in, with implications for origin of life). In other words, 3a, 3b, and 3c are driving dynamics for evolution of life and mind that owe their origin to facts of mathematics – not physics, chemistry, or biology.

4) Conclusions. I briefly mentioned some implications for the connections between evolutionary biology and the field of diverse intelligence, for example suggesting this cognition-first re-framing:

The video talk is here.

Study guide is here.

The bigger context for this talk is my contention that three main incorrect assumptions of current paradigms are holding back progress in regenerative medicine, engineering, computer science, cognitive science, and the ethics of flourishing for all. Roughly, these assumptions are:

A) the genome determines outcomes via mechanical chemistry, because nothing at that level has goals or knows anything. That is wrong because

- turning a genome into functional form occurs via a process that is not well-described by emergence/complexity (open-loop mechanical models) but much better handled by models with setpoints (goals) and problem-solving competencies (a.k.a., intelligence, creative improvisation). It is now a matter of experimental fact that we leave important discoveries on the table if we ignore cybernetics and insist, from a philosophical armchair, that only brainy animals have goals or learn. More here, here, and here.

- we now know at least one mechanism for how morphogenesis pursues end-states and solves problems (developmental bioelectricity as the basis for cellular networks’ collective intelligence), which allows us to communicate at a high level with the collective intelligence of cells as they navigate anatomical morphospace. That is because the mechanisms and algorithms by which we know things and pursue goals in behavioral space are an evolutionary pivot of much more ancient cellular competencies in navigating anatomical morphospace. There are applications of this in regenerative medicine, birth defects, cancer, etc. but much more remains to be done. More here and here.

B) minds are found in brains only. That is wrong, in light of advances in developmental biology, evolutionary biology, and bioengineering which are being developed by the field of Diverse Intelligence.

- Agentic talk is not a philosophical or linguistic preference – it is an empirical matter, and its usage is to be decided not by stale, ancient categories but by experiments: take the tools of behavioral science, apply them widely, and see where any system is along the spectrum of persuadability. Massive advances in regenerative medicine await improvements in our ability to communicate with higher levels of control (virtual governors) in our bodies. More here, here, and here.

- Mind blindness – recognizing other minds, especially those very different from our own, is a two-way IQ test, and we must develop conceptual frameworks and technology to help us do much better than the poor, persistent intuitions with which our own evolutionary history set us up. See more here, here, and here. If we can’t recognize intelligence in our own cells and tissues, how can we hope to recognize exobiological variants? SUTI (the search for unconventional terrestrial intelligence) is essential for enlarging our radius of care to beings with whom we share (and increasingly will share) our world. More here and here.

C) we are fundamentally physical beings. Doubting this assumption is perhaps my most controversial claim – that we are not physical agents manipulating passive data, but that this whole distinction between physical agents and passive patterns in media (data) may be too constraining, and that we might be better understood as patterns projecting through physical interfaces. The short argument is here; the full talk is here.

- Physical facts are not the only important facts (example); thus, it is already known from mathematics that physicalism is not viable.

- Perhaps mathematical objects are not the only kind of patterns that live in the Platonic latent space – maybe there are other patterns there, which are better described by aspects of behavioral science. The near-universal belief among scientists that truths from a non-physical but structured space apply only to the subject matter of mathematics, and totally bypass that of biology and cognitive science, is not an empirical result or a necessary axiom – just a limiting assumption. These patterns may not be “eternal and unchanging”, but may instead be profoundly impacted by their ingressions into the physical world and/or lateral interactions among them.

- These questions are now actionable, as we seek to predict, explain, and facilitate competencies of beings who have never been selected for specific features (such as Anthrobots) – where do those come from and how are we to update the formalism around cost of computation to explain their adaptive form and function?

- There are massive implications for computer science and other disciplines in understanding what exactly the Platonic space offers to both evolution and engineers (static patterns? dynamic policies? arbitrary compute?) as “free lunches” (true enablements, where you get more than you put in, not just constraints). This is now being studied in a range of very minimal computational and physical systems, to complement the work in complex biology.

- I think the paradigm of algorithms and machines, as mechanical systems where our formal models tell the whole story, is wrong. I think the ingression of competencies – recognizable by behavioral scientists, not merely complexity – is universal, in-forming everything from complex organisms all the way down. More here and here, and much more coming this year in primary research papers seeking to understand the far (minimal) end of this spectrum.

- Possible implications for interactionist models: the mind-body relation is the same as the math-physics relation, just scaled up so that it looks like “cognitive science” to us. Causation has to get updated to be useful beyond billiard-ball models of purely “physical” causes (more work from collaborations with philosophers of causation forthcoming this year). For example, an important meaning of “cause” is something that serves as a deep explanation for an event, and in that sense, most questions in biology and physics end in the math department. If the impact of the truths of number theory on physical events doesn’t break the conservation of mass-energy, surely we can similarly contemplate the functional impact of other kinds of patterns that have made themselves obvious in brains (i.e., kinds of minds).

Evolution is an essential part of the story of intelligence, and of the autopoiesis of the multiscale architecture of physical interfaces in which it becomes embodied. But the current, mainstream evolutionary synthesis is just one part of that story – mainly focused on the front-end interface of living beings. I think significant updates to the theory (and enabled technology) of evolution are coming, targeting the origin, metamorphosis, and future of diverse embodied minds in constant flux.

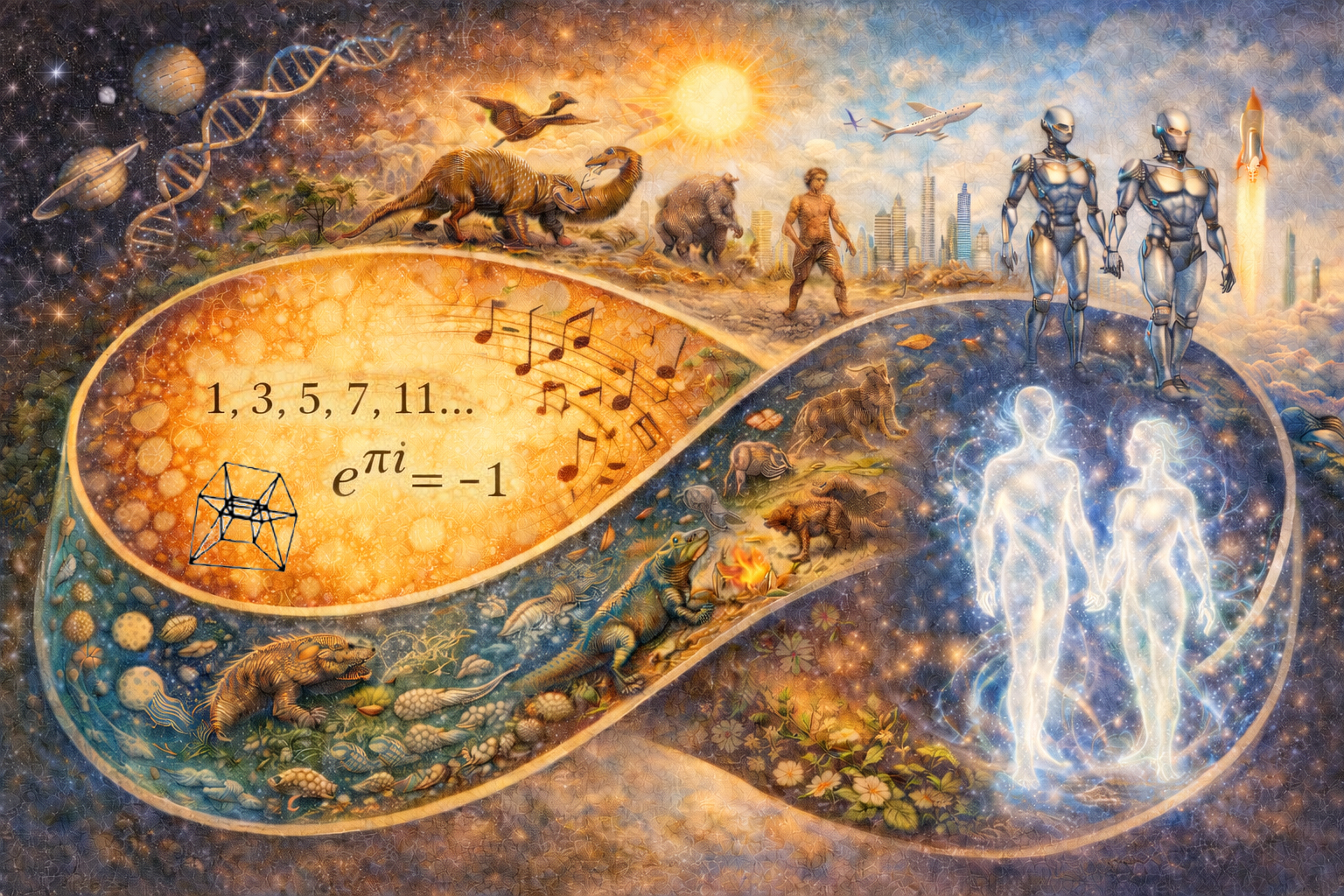

Title image made with the help of ChatGPT.
