What is the relationship between learning and the degree to which an agent is a coherent, integrated, causally important whole that is more than the sum of its parts? Here I briefly describe work with Federico Pigozzi, a post-doc in my group, and Adam Goldstein, a former graduate student, shown in a recent preprint and the final official paper here.

First, recall that we are all collective intelligences – we’re all made of parts, and we’re “real” (more than just a pile of parts) to the extent that those parts are aligned in ways that enable the whole to have goals, competencies, and navigational capabilities in problem spaces that the parts do not. As a simple example, a rat is trained to press a lever and get a reward. But no individual cell has both experiences: interacting with the lever (the cells at the palm of the paws do that) and receiving the delicious pellet (the gut cells do that). So who can own the memory provided by this instrumental learning – who associates the two experiences? The owner is “the rat” – a collective that exists because an important kind of cognitive glue enables the collection of cells to integrate information across distance in space and time, and thus know things the individual cells don’t know. The ability to integrate the experience and memory of your parts toward a new emergent being is crucial to being a composite intelligent agent.

It’s pretty clear that an agent needs to be integrated into a coherent, emergent whole to learn things that none of its individual parts know (or can know). But does it work in reverse? Does learning make you more (or less) of an integrated whole? I wanted to ask this question, but not in rats; because we’re interested in the spectrum of diverse intelligence, we asked this question in a minimal cognitive system – a model of learning in gene regulatory networks (see here for more information on how that works). To recap, what we showed before is that models of gene regulatory networks, or more generally, chemical pathways, can show several different kinds of learning (including associative conditioning) if you treat them as a behaving agent – stimulate some nodes, record responses from another node, and see if patterns of stimulation change the way the response nodes behave in the future, according to the principles of behavioral science. For example, a drug that doesn’t have any effect on a certain node will, after being paired repeatedly with another drug that does affect it, start to have that effect on its own (which suggests the possibility of drug conditioning and many other useful biomedical applications).

Biochemical pathways like GRNs are one of many unconventional model systems in which we study diverse intelligence by applying tools and concepts of behavioral science and neuroscience, to better understand the spectrum of minds and develop new ways to communicate with them for biomedical purposes. So, we know we can train gene regulatory networks, but do they have an emergent identity over and above the collection of genes that comprise them – is there a “whole” there, and if there is, how does training affect its degree of reality (the strength with which that higher-level agent actually matters)?

Whether a system can be more than the sum of its parts is an ancient philosophical debate. But now we have metrics of this – causal emergence and other mathematical ways to estimate this for a given system (see references in the manuscript, and here – a paper written with one of the key developers of this important new advance, Erik Hoel). So now we can ask rigorously: when something learns, what happens to its causal emergence?
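To make the idea of such a metric concrete, here is a minimal sketch of one member of this family: Hoel's effective information (EI), computed for a small Markov chain before and after coarse-graining. The 8-state transition matrix, the grouping, and the function names are illustrative assumptions of mine, not data or code from the manuscript, and the manuscript may well use a different estimator of causal emergence.

```python
from math import log2

def effective_information(tpm):
    """EI in bits: mean KL divergence of each row from the average row,
    i.e. the effect of intervening uniformly on every state."""
    n = len(tpm)
    mean_row = [sum(row[j] for row in tpm) / n for j in range(n)]
    total = 0.0
    for row in tpm:
        total += sum(p * log2(p / q) for p, q in zip(row, mean_row) if p > 0)
    return total / n

def coarse_grain(tpm, groups):
    """Macro-scale transition matrix, weighting the micro states
    inside each group uniformly."""
    macro = []
    for src in groups:
        row = []
        for dst in groups:
            row.append(sum(tpm[i][j] for i in src for j in dst) / len(src))
        macro.append(row)
    return macro

# Hypothetical toy system: states 0-6 hop randomly among themselves,
# state 7 always maps to itself.
micro = [[1 / 7] * 7 + [0.0] for _ in range(7)] + [[0.0] * 7 + [1.0]]
groups = [list(range(7)), [7]]  # lump the noisy states into one macro state
macro = coarse_grain(micro, groups)

ei_micro = effective_information(micro)
ei_macro = effective_information(macro)
causal_emergence = ei_macro - ei_micro  # positive: the macro scale does more causal work
```

In this toy case the micro scale carries about 0.54 bits of effective information and the two-state macro description carries 1 bit, so the coarse-grained "whole" is a better causal model than its parts. That difference is the kind of quantity one can track before, during, and after training.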

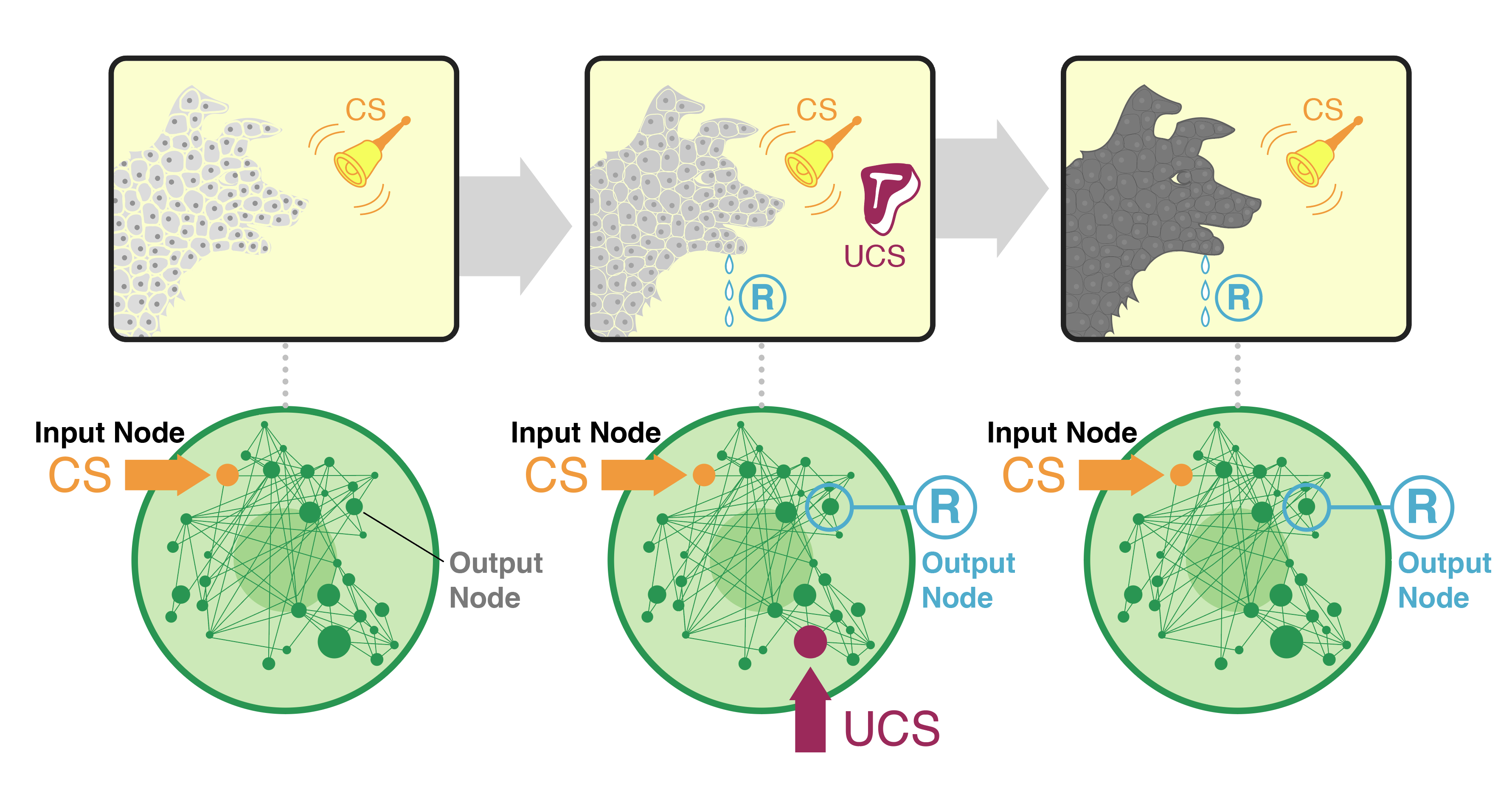

This diagram illustrates the basic setup. In the top row we show a classic Pavlovian type of experiment – associate salivation (brought on by exposure to meat) with a bell (which normally does not cause salivation; it is the conditioned stimulus (CS), which starts off as a neutral stimulus until it’s paired with the meat). The top row of panels schematizes our hypothesis: that the agent becomes more real (not just a collection of cells but an integrated whole that is more than the sum of its parts – thus the solid darker color and less space between the cells), due to the training that causes it to integrate information across modalities and across time. How we actually test it is shown in the bottom row: we take dozens of available parametrized gene-regulatory network models from real biological data, and stimulate them in a Pavlovian paradigm. We choose nodes already identified in prior work as being able to support associative learning. We stimulate them in the way that causes a neutral stimulus to become a conditioned stimulus, and we measure causal emergence of the network before, during, and after that training.
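The three-phase protocol (naive test, paired training, post-training test) can be sketched on a toy circuit. Everything below is a hypothetical stand-in: the two-node dynamics, the rate constants, and the names `step`, `run_phase`, and `pavlovian_protocol` are assumptions chosen for illustration, not one of the published GRN models and not the analysis code from the manuscript.

```python
def step(state, us, cs, dt=0.1):
    """One Euler step of a hypothetical toy circuit.
    R is the response node; M is a slowly decaying memory node."""
    r, m = state
    dm = us * cs * (1.0 - m) - 0.001 * m  # M rises only when US and CS coincide
    dr = (us + m * cs) - r                # US drives R directly; CS only through M
    return (r + dt * dr, m + dt * dm)

def run_phase(state, us, cs, n_steps):
    """Hold the stimuli fixed for n_steps; return final state and peak response."""
    peak = 0.0
    for _ in range(n_steps):
        state = step(state, us, cs)
        peak = max(peak, state[0])
    return state, peak

def pavlovian_protocol(n_pairings=5):
    state = (0.0, 0.0)
    # Naive test: the neutral stimulus alone should evoke no response.
    state, naive = run_phase(state, us=0.0, cs=1.0, n_steps=50)
    # Training: repeated US+CS pairings separated by rest periods.
    for _ in range(n_pairings):
        state, _ = run_phase(state, us=1.0, cs=1.0, n_steps=20)
        state, _ = run_phase(state, us=0.0, cs=0.0, n_steps=20)
    # Washout, so any leftover US-driven response has decayed before testing.
    state, _ = run_phase(state, us=0.0, cs=0.0, n_steps=100)
    # Post-training test: the CS alone now drives the response node.
    state, trained = run_phase(state, us=0.0, cs=1.0, n_steps=50)
    return naive, trained

naive, trained = pavlovian_protocol()
```

In this sketch the neutral stimulus evokes no response before training and a strong one afterward. In the real experiments, the same before/during/after windows are where a causal emergence metric would be evaluated on the network's node activity.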

Here’s an example of what we see, in a figure from the manuscript made by Federico:

The Y axis indicates the degree of causal emergence. What can be seen here is that the network in the top row has low causal emergence during the initial stage (in its initial, naive state), something starts happening during the training, but the causal emergence really takes off after the training: in future rounds, when it comes across the stimuli it’s been trained on, it really comes alive as an integrated emergent agent. I’ll just mention two of the interesting points from the paper:

First, note that the causal emergence drops between the stimuli. It’s not that the network goes quiet – we checked, there’s just as much activity among the nodes during those times. But mere activity of parts is not the same thing as being a coherent, emergent agent. That collective nature seems to disappear when we’re not talking to it. It’s almost as if this system is too simple to keep itself awake (and stay real) when no one is stimulating it. It relies on outside stimulation to bring the parts together for the duration of the interaction. Between instances of stimulation, the emergent self of the network drops back into the void (the collective intelligence disbands, even though the parts have not quieted down, when not interacted with by another active being). There’s something poetic about that, and we will eventually find out what would have to be added to such an agent to enable it to keep itself awake. Recurrence is not it – these networks are already recurrent.

Second, the way in which causal emergence changes after training is not the same in all networks. There’s a lot of variety. But, remarkably, that variety is not a continuum of all the uncountable ways the time profile of a variable could change. It turns out that there are really five distinct, discrete types of effects! Here is the t-SNE plot of the 2D embedding Federico made:

It’s pretty wild that there are, naturally, a small, discrete number of ways that training can affect causal emergence, and all the networks we looked at exhibit one of these ways. This allowed us to classify networks into 5 types and this classification doesn’t match other known ways that networks have been distinguished in the past. Apparently the effect of training on causal emergence is a new aspect with respect to which networks can now be classified.

So what does all this mean? For the field of diverse intelligence, this adds another unconventional model that can be used to understand collective beings and the factors that affect how much a system is an emergent whole. It confirms that metrics like causal emergence are not just for brains, and it suggests interesting experiments in animal and human subjects to look at causal emergence metrics in brain signaling during and after learning. For biomedicine, we are pursuing a number of implications of this set of findings for managing disease state and development-related GRNs with stimuli that coax desired complex outcomes, and of course for cancer as a dissociative identity disorder of the somatic collective cellular intelligence.

One final thing: metrics of causal emergence have been suggested to be measuring consciousness. If you’re into Integrated Information Theory as a theory of consciousness, then there are some obvious implications of the above data. Now, I’m not saying anything about consciousness here (not making any claims about what this means for the inner perspective of your cellular pathways), but we can think about this as one example of the broader effort to develop quantitative metrics for what it means to be a mind (that is nevertheless embodied in a system consisting of parts that obey the laws of physics). For the sake of the bigger picture in philosophy of mind and diverse intelligence research, let’s do some soul-searching (pardon the pun). Obviously a lot of people will balk at the idea that molecular networks (not even cells!) can have a degree of emergent selfhood that is on the same spectrum with humans’ exalted ability to supervene on the biochemistry in our brains. But these measures of causal emergence are used in clinical neuroscience to distinguish, for example, locked-in patients (who can’t move, but in whom “there’s someone in there”) from coma or brain-dead patients (who are a set of living neurons but not a functional human mind).

So, what do we do, in general, when such tools find mind in unexpected places? Neuroscientists are developing methodology – think of it as a detector that tries to rule on any given system with respect to whether it has a degree of consciousness. What happens when those tools inevitably start triggering positive on things that are not brains (cells, plants, inorganic processes, and patterns)? One move would be to emphasize that there are ways to stretch tools beyond their applicability – maybe that’s what this is – using tools appropriate for one area to give misleading readings in an area in which they are not meaningful. Maybe… But we need to be really careful with this. First, because calling “out of scope” every time your tool indicates something surprising is a great way to limit discovery and insight. For example, that kind of thinking would have sunk spectroscopy, which revealed that earthly materials are to be found in celestial objects. In any case, if one rules these tools inapplicable to certain embodiments, one has the duty to say why and where the barrier is: what kinds of systems are illegal fodder for these kinds of computational mind-finding methods and why? If one makes this kind of claim, one needs to specify and defend the boundary of applicability and show why maintenance of that boundary is helpful.

The other way to go is to realize that, like with spectroscopy and many many other discoveries, the purpose of a tool is to show you something you didn’t know before. Something that seemed like it couldn’t be right, but then you found out that the tool is actually fine, it’s the prior assumptions that need to go. We’ve learned from physics that one of the most powerful things that such tools (conceptual and empirical) can do is lead us to new unifications. That is, things that you thought were really different turn out to be, at their core, the same thing in different guises. Magnets, electrostatic shocks, and light – when tamed with good theory and the tools it enables – not only turn out to be aspects of the same underlying phenomenon, but also opened us to the beauty and utility of new instances of it that we never knew about (X-rays, infrared light, radio waves, etc.).

My personal bet is that the application of tools developed by the neuroscience and consciousness studies communities to unconventional substrates is of that kind, and we are studying lots of new examples of biological (and other!) systems using these methods – stay tuned for much more. I think these kinds of approaches are, like detectors of different kinds of electromagnetic signals were, a way to expand past our native-mind-blindness and develop principled ways to relate to the true diversity of others. We will eventually get over our pre-scientific commitments and ancient categories, and use the developing tools to help us recognize diverse cognitive kin.

In the meantime, we could take a cue from the story of Pinocchio, who wanted to be a real boy and was (presciently) told that this would require a lot of effort in learning (both at school and from the environment; a future blog post will discuss learning vs. being trained, and how one can tell the difference).

GRN training in general shows us that no matter how minimal, deterministic, and simple you may appear, there are likely surprises in store which enable you to learn from experience and raise yourself up, out of the mere mechanical parts of which you are made. Our new results in GRNs can be (very) roughly summarized as: whatever you are, if you want to be more real, learn.

All graphic images made by Jeremy Guay of Peregrine Creative. Data images made by Federico Pigozzi for the manuscript.
