One of the key implications of my TAME framework is that intelligence can be found in surprising places. We are not yet good at predicting where, and we need experimental work to ask where various unconventional systems (especially cells, tissues, etc.) land on the Spectrum of Persuadability.

One useful and interesting thing about a Diverse Intelligence approach is that it encourages us to test out tools and concepts from other disciplines, especially those focused on understanding behavior and cognition, to look for improved prediction, control, and invention of various unconventional systems. For example, right now molecular medicine treats gene regulatory networks and protein pathways as low-level machines, to be micromanaged by forcing specific signaling states via drugs targeting the nodes. But what if they had more advanced computational capabilities, and could be managed by taking advantage of their ability to change future behavior in light of past experience? The implications of this are discussed here and here.

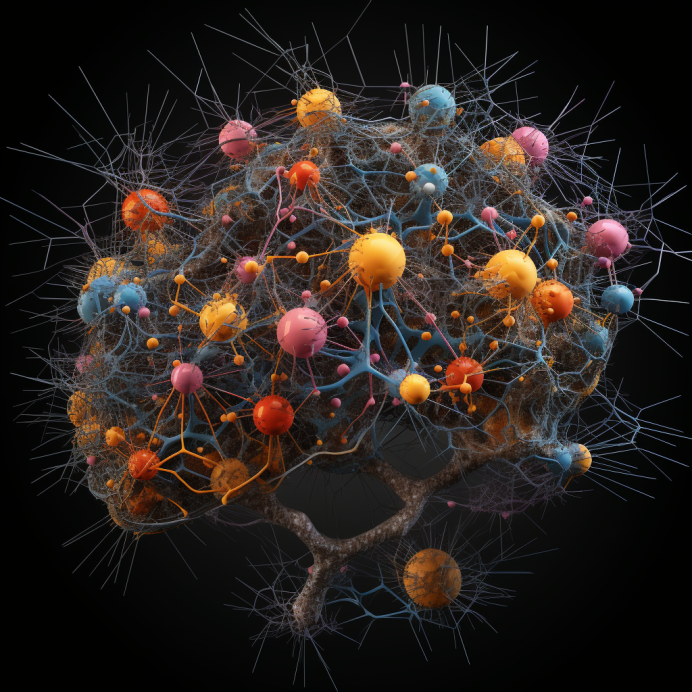

So, what proto-cognitive competencies might gene regulatory networks (GRNs) and other kinds of chemical pathways have? Initially, any such competency seems unlikely; after all, they are modeled as simple, deterministic systems in which a few nodes turn each other on and off (or up and down) according to clear rules. There is no room for magic and no hidden variables or mechanisms; surely they are not capable of anything like learning? But, in keeping with TAME (and its assertion that any assignment of a level of intelligence to a system is largely a statement about our own discernment), we decided to test that assumption: treat the pathway as if it were an agent and apply the various tools of behavioral testing. Some of the nodes are treated as inputs (sensory pathways) and others as outputs (behavioral effectors), and we can test for habituation, sensitization, anticipation, association, etc.

When we did, we found several different kinds of learning, in both Boolean and continuous pathway models. Interestingly, these memory types (including Pavlovian conditioning) occur much more frequently in real biological models than in random ones. One key feature, though, is that there is no synaptic plasticity here. Neither the connections between the nodes (the network's topology) nor the strengths of the weights (the rules governing how the nodes control each other) change: the physical structure of the network is totally fixed during training and subsequent testing. What does change are the values of the node activities at any given time, as signals propagate through the network (its physiology) and push it into new attractors that capture a different likely path for specific inputs.
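To make the "fixed structure, changing state" point concrete, here is a minimal sketch under loudly stated assumptions: it uses a made-up four-node Boolean network (inputs `UCS` and `CS`, an internal node `M` with a positive self-loop, and an output `R`), not one of the biological models referred to above. The `RULES` table is never modified; only node activities change, and pairing the two stimuli leaves the network in a different attractor, so that `CS` alone now triggers `R`.

```python
from typing import Callable, Dict

State = Dict[str, bool]

# Hypothetical 4-node Boolean network. Node names and rules are invented
# for illustration; they are not taken from any published pathway model.
#   UCS, CS : inputs (unconditioned / conditioned stimulus), clamped by the caller
#   M       : internal node with a positive self-loop (can latch on)
#   R       : output (response)
RULES: Dict[str, Callable[[State], bool]] = {
    "M": lambda s: s["M"] or (s["CS"] and s["UCS"]),
    "R": lambda s: s["UCS"] or (s["CS"] and s["M"]),
}

def step(s: State) -> State:
    """Synchronous update: every rule reads the previous state."""
    return {**s, **{node: rule(s) for node, rule in RULES.items()}}

def run(s: State, ucs: bool, cs: bool, steps: int = 3) -> State:
    """Clamp the inputs and let activity propagate for a few steps."""
    s = {**s, "UCS": ucs, "CS": cs}
    for _ in range(steps):
        s = step(s)
    return s

naive: State = {"UCS": False, "CS": False, "M": False, "R": False}

before = run(naive, ucs=False, cs=True)["R"]    # test CS alone, pre-training
trained = run(naive, ucs=True, cs=True)         # training: pair CS with UCS
trained = run(trained, ucs=False, cs=False)     # inputs removed
after = run(trained, ucs=False, cs=True)["R"]   # test CS alone, post-training

print(before, after)   # False True
print(trained["M"])    # True: the "engram" is an activity state, not a rule change
```

The design is deliberately crude: a single self-sustaining node acts as a bistable switch. In the real pathway models such effects emerge from many interacting nodes; this sketch only shows that a fixed rule set can, in principle, support Pavlovian-style pairing.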

This raises a key question. Given that the structure of the network doesn’t change during training, where is the memory? Where is the trained network's experience stored, given that there is no explicit memory medium to hold the engrams and the network's structure does not change in light of its experience? Or, asked another way: if the structure doesn’t change, would it even be possible to distinguish a naive (pre-training) network from a trained one? What kind of observations would you have to make to look at a network and read its mind, that is, to know what it had learned in its prior history, given that its structure is identical before and after? What else would you need to do, and can you read its content without interacting with it (stimulating it to see what happens)? This parallels the idea of neural decoding: can we look at a brain (scanning it however needed) and decode the cognitive content of the individual? We can use these pathway models as a simple toy model to explore the notion of memory, and the difference between accessing engrams in the first person (the being in whose cognitive medium the memories are formed) vs. in the third person (external attempts to decode another being’s mind from the outside).

It may not be possible to know what memories a trained pathway contains from pure observation, but you can test the system with stimuli and get a clear answer based on its behavioral outcome. So, which kinds of systems cannot be decoded by pure observation, and instead must be functionally interacted with for an observer to understand what information they store? Here is a video of Richard Watson, Chris Fields, and me discussing this question; they had many interesting ideas:

In the end, here’s what I think is going on. Some systems cannot be decoded (understood, controlled) by passive inspection, but only by interacting with them functionally, entering a sort of entangled dance in which you stimulate or signal to them and see what happens. In a way, it’s a Heisenberg-uncertainty-like effect: you can’t get all the information you need without disturbing (changing) the system. The surprising thing is that this property shows up very early on the spectrum of agency, in very simple systems. You don’t need a complex brain to have an internal perspective; by the time you have chemical pathways, it is already in place. Maybe we need a parameter: a number expressing the degree to which a system must be interacted with to be truly understood. GPT-4 made the following suggestions for naming this quantity:
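One hedged way to make such a parameter concrete on a toy system is sketched here. Everything in it is an assumption made for illustration: an invented four-node Boolean network with fixed rules (inputs `UCS`, `CS`; hidden node `M`; output `R`), four possible one-shot training histories, and a hypothetical score `interaction_dependence`, defined as the share of history pairs that leave different internal states yet can be told apart only by probing, not by passively watching the output. It is not a proposed definition for any of the names listed, just one possible operationalization.

```python
from itertools import combinations

# Invented toy network (not a published GRN): fixed rules, synchronous update.
def step(s):
    return dict(s, M=s["M"] or (s["CS"] and s["UCS"]),
                   R=s["UCS"] or (s["CS"] and s["M"]))

def run(s, ucs, cs, steps=3):
    s = dict(s, UCS=ucs, CS=cs)
    for _ in range(steps):
        s = step(s)
    return s

NAIVE = {"UCS": False, "CS": False, "M": False, "R": False}

# Hypothetical training histories: one (UCS, CS) presentation, then inputs off.
HISTORIES = {"none": None, "cs_only": (False, True),
             "ucs_only": (True, False), "paired": (True, True)}

def final_state(history):
    s = dict(NAIVE)
    if history is not None:
        s = run(s, *history)
    return run(s, ucs=False, cs=False)

def passive_trace(s, steps=5):
    """Third-person observation: watch the output node, no stimulation."""
    trace = []
    for _ in range(steps):
        s = step(s)
        trace.append(s["R"])
    return tuple(trace)

def probe(s):
    """Functional read: present the CS and record the response."""
    return run(s, ucs=False, cs=True)["R"]

states = {name: final_state(h) for name, h in HISTORIES.items()}
# Pairs of histories that left the network in different internal states.
differing = [(a, b) for a, b in combinations(states, 2) if states[a] != states[b]]
passive_hits = sum(passive_trace(states[a]) != passive_trace(states[b])
                   for a, b in differing)
only_by_probe = sum(probe(states[a]) != probe(states[b])
                    and passive_trace(states[a]) == passive_trace(states[b])
                    for a, b in differing)
# Hypothetical score in [0, 1]: share of stored differences only interaction reveals.
interaction_dependence = only_by_probe / len(differing)
print(len(differing), passive_hits, only_by_probe, interaction_dependence)  # 3 0 3 1.0
```

Note the caveat built into this toy: an observer who could read the hidden node `M` directly would see that it differs, but would still need stimulation to learn what that difference means for behavior.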

- Interospecivity:
  - Root: Derived from ‘Intero-’ (inside or internal) and ‘-specivity’ (related to observation).
  - Meaning: The degree to which one must observe from within or via interaction to understand an agent’s thoughts.

- Dialognosia:
  - Root: From ‘Dialog-’ (converse or interact) and ‘-gnosia’ (knowledge).
  - Meaning: Knowledge of an agent’s inner thoughts that can only be attained through dialogue or interaction.

- Actoceptive Index:
  - Root: ‘Acto-’ (derived from action or activity) and ‘-ceptive’ (related to perception).
  - Meaning: A measure indicating the extent to which comprehension of an agent’s thoughts requires action-based perception.

- Interactendency:
  - Root: From ‘Interact-’ (between or mutual action) and ‘-endency’ (a disposition or inclination towards).
  - Meaning: The inclination or tendency for an agent’s inner cognition to be understood primarily through interaction.

- Engagivity Coefficient:
  - Root: ‘Engage’ (to interact or involve) and ‘-ivity’ (a state or condition).
  - Meaning: A coefficient representing the state in which understanding an agent’s cognition is dependent upon engagement or interaction.

I haven’t picked one yet. Meanwhile a few miscellaneous thoughts:

- The whole “you can’t tell what memories it holds without interacting with it” aspect is very compatible with the more general point of TAME regarding agency, intelligence, and overall cognitive level: these can’t be judged from purely observational data. That is, merely by watching things happen, you can’t tell how much competency is under the hood; you have to do perturbative experiments that confront the system with novel challenges, to test hypotheses about what it’s measuring, remembering, learning, optimizing, etc.

- What if the agent gives you a written note about what they are thinking? Isn’t that a case where you can access their memories with pure (noninvasive) reads? Yes, and this reflects the system wanting to communicate: a narrow-bandwidth channel that works like the shelf in Huygens’ clock experiment, helping discordant oscillators find a common resonance. Perhaps high-level agents that want to make it easier to share thoughts (states) with each other can use communication through a narrow medium with a low privacy coefficient (one that can easily be read out by third-person observation) to help each other solve the privacy problem. Note that in doing this, they are effectively making themselves easier to manipulate and interact with, since this functional interaction is what enables fidelity in reading the meaning of a system’s memory. Thus, vulnerability is a necessary part of efficiently spreading content from mind to mind.

- As pointed out by Wesley Clawson, there are gradations of “invasiveness” for the interaction (as distinct from purely observational read operations). Thus, the observer might provide simple stimuli, change setpoints, or give inputs calculated to radically change the inner structure of the agent (“the thought that breaks the thinker” in its most extreme form): different degrees of interaction to improve understanding of the system at hand.

- The further right you go on the Spectrum of Persuadability, the less readable systems are by non-destructive reads alone (observation, not interaction). As this property increases, so does the extent to which whatever you say about the system is really a statement about the dyad of “you + system”, not about the system itself. This is consistent with TAME and the notion of polycomputing, where the observer’s perspective and their own cognitive competencies are a critical part of any view of the system (and there is no single objective, correct view: it’s observer-relative).

- As Thomas Varley points out, issues of dynamical degeneracy (causal emergence) and time-symmetric chaos, as seen in the work of Hoel, Tononi, Olaf Sporns, and others, give additional reasons why the meaning inherent in such physiological engrams cannot be read out by external observation alone. Additional reading on all this, courtesy of Thomas:
  - Lynn, C. W., Cornblath, E. J., Papadopoulos, L., Bertolero, M. A., & Bassett, D. S. (2021). Broken detailed balance and entropy production in the human brain. Proceedings of the National Academy of Sciences, 118(47).
  - Roldán, É., & Parrondo, J. M. R. (2012). Entropy production and Kullback-Leibler divergence between stationary trajectories of discrete systems. Physical Review E, 85(3), Article 3.
  - Ay, N., & Polani, D. (2008). Information flows in causal networks. Advances in Complex Systems, 11(01), 17–41.

- So then, why is it relatively easy to understand (even if not perfectly) the content of your own mind and the engrams of the memories you formed? It’s precisely because your relationship with your own mind is one of constant functional intervention. Via active inference and other strategies, you (the emergent virtual governor) are constantly intervening in your own cognitive medium, which is harder for others to do from the outside. The internal perspective is privileged for this reason: it’s an active, functional one, not a purely observational read-and-decode process.

- The above is consistent with the increasing realization that recalling your own memories is also a perturbative process – memories are not read out non-destructively, but are actually modified by recall. We revise our memories by recalling them.

- So where is the memory in such a system? It’s in the relationship between it and the observer.

- One way to recover the memories is to interact with a copy or a model of the system, instead of the system itself. And of course this is what we (as biological systems, not just brains) do all the time, because we have internal self-models with which to do simulated experiments to know what we think.

- The level of observation is crucial. Even a traditional, easily recognizable memory element (e.g., a flip-flop) looks strange if examined at the level of atomic particles: none of them have been marked or changed by storing data in the register; they are still factory-state particles. So where is the memory, then, if you can’t scratch it onto the copper atoms? It’s present in the higher-level configuration of the system. Memories are always present at one level and inscrutable at lower levels of observation (bringing us back to the relationship between a system, its own private memories, and an external observer who is trying to decode them and needs to pick the right level).
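The points above that recall is itself perturbative, and that one can instead interrogate a copy or model of a system, can be illustrated with a deliberately simple sketch. Its assumptions: a hypothetical `ToyPathway` whose memory is a single continuous activity level `m`; an unreinforced recall (cue presented without the unconditioned stimulus) weakens `m`, a crude stand-in for extinction or reconsolidation effects; and `copy.deepcopy` plays the role of an internal self-model. The parameter values are arbitrary.

```python
import copy
from dataclasses import dataclass

@dataclass
class ToyPathway:
    """Hypothetical pathway whose memory is one continuous activity level m.

    All parameters are illustrative assumptions, not fitted to any model.
    """
    m: float = 0.0           # association strength, held as node activity
    threshold: float = 0.5   # the cue elicits a response when m exceeds this
    extinction: float = 0.3  # fraction of m lost on each unreinforced recall

    def pair(self) -> None:
        """Training: cue paired with the stimulus moves m halfway toward 1."""
        self.m += 0.5 * (1.0 - self.m)

    def recall(self) -> bool:
        """Present the cue alone. Reading the memory out also weakens it."""
        responded = self.m > self.threshold
        self.m *= 1.0 - self.extinction
        return responded

original = ToyPathway()
for _ in range(3):
    original.pair()                # m: 0.5 -> 0.75 -> 0.875

# Probe a copy (stand-in for a self-model): the original is left untouched.
model = copy.deepcopy(original)
print(model.recall(), original.m)  # True 0.875

# Probe the original itself: each read perturbs what is being read.
print([original.recall() for _ in range(3)])  # [True, True, False]
```

In this toy, reading the copy answers the question "would it respond?" without cost, whereas reading the original three times erodes the very association being measured.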
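For readers following up the references on broken detailed balance and entropy production, here is a hedged sketch of the basic quantity involved; it is a generic textbook estimator, not the analysis pipeline of either paper. For a stationary discrete-time Markov chain with transition matrix P and stationary distribution π, the entropy production rate is Σ π_i P_ij ln(π_i P_ij / π_j P_ji), which equals the Kullback-Leibler divergence between forward and time-reversed transition statistics; it is zero exactly when detailed balance holds, i.e., when the dynamics look statistically the same run backwards. The three-state `ring` chain is a made-up example.

```python
import math
from typing import List

Matrix = List[List[float]]

def stationary(P: Matrix, iters: int = 1000) -> List[float]:
    """Stationary distribution by power iteration (assumes an ergodic chain)."""
    n = len(P)
    pi = [1.0 / n] * n
    for _ in range(iters):
        pi = [sum(pi[i] * P[i][j] for i in range(n)) for j in range(n)]
    return pi

def entropy_production(P: Matrix) -> float:
    """KL divergence between forward and time-reversed transition statistics."""
    pi = stationary(P)
    n = len(P)
    sigma = 0.0
    for i in range(n):
        for j in range(n):
            fwd = pi[i] * P[i][j]
            if fwd == 0.0:
                continue
            rev = pi[j] * P[j][i]
            if rev == 0.0:
                return math.inf  # transition never reversed: infinite EP
            sigma += fwd * math.log(fwd / rev)
    return sigma

def ring(p: float) -> Matrix:
    """Hypothetical 3-state cycle: step clockwise w.p. p, counterclockwise w.p. 1-p."""
    return [[0.0, p, 1 - p],
            [1 - p, 0.0, p],
            [p, 1 - p, 0.0]]

print(entropy_production(ring(0.5)))            # 0.0 (detailed balance holds)
print(round(entropy_production(ring(0.9)), 4))  # 1.7578, i.e. 0.8 * ln 9
```

For this ring the closed form is (2p - 1) ln(p / (1 - p)), which the estimator reproduces.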
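The flip-flop point can be sketched at the level of logic gates, which here stand in (as an assumption of this example) for the unchanged "factory-state" parts. A cross-coupled NOR set-reset latch stores one bit, yet the only component rule, `nor`, is identical before and after storage; the bit exists only as the configuration `(q, qbar)` of the coupled pair. The helper names are hypothetical.

```python
from itertools import product

def nor(a: bool, b: bool) -> bool:
    """The only 'part' in this circuit; its rule never changes."""
    return not (a or b)

def settle(s: bool, r: bool, q: bool, qbar: bool, max_iters: int = 10):
    """Update a cross-coupled NOR latch until its outputs stop changing."""
    for _ in range(max_iters):
        new_q, new_qbar = nor(r, qbar), nor(s, q)
        if (new_q, new_qbar) == (q, qbar):
            break
        q, qbar = new_q, new_qbar
    return q, qbar

def truth_table():
    return [nor(a, b) for a, b in product([False, True], repeat=2)]

table_before = truth_table()
state = (False, True)                        # latch holds 0
state = settle(True, False, *state)          # pulse Set
state = settle(False, False, *state)         # release: inputs back to rest
print(state, truth_table() == table_before)  # (True, False) True
```

Inspecting `nor` alone tells you nothing about what is stored; you have to look at (or probe) the configuration of the whole circuit.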

Even something as simple as a small gene regulatory network model has interesting implications for deep questions about memory and the selves to whom memories belong.
